
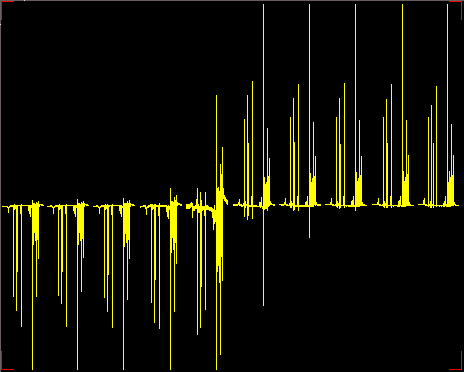

Hello, I've wanted to ask this for a while. A sample finally arrived that shows this behavior, and I took a screenshot (attached). It's a 90° pulse calibration using the zero crossing near the 360° pulse in a standard one-pulse FT experiment (arrayed pulse width at constant transmitter power). Normally I expect a sine-wave-like picture as the pulse width changes. Instead, there is a "binary" type of pattern here: the peak goes all the way down, then almost immediately all the way up. I've verified that there is no transmitter overflow; I've set the receiver gain sufficiently low. The step here is about a 10° flip angle. What's the physical explanation for this?


It looks like you've got "auto scaling" turned on. Each spectrum is being rescaled vertically so that it extends to the top or bottom of the display. Look at the spectra next to the middle one: their noise and artifacts are larger than in the rest.

BTW, it is often more convenient to search for the 2pi (360 deg) pulse length rather than the pi (180 deg) pulse length because it permits a shorter recovery time. When the spins are closer to their equilibrium magnetization, it isn't necessary to wait as long for equilibration. Spins that have been rotated by approximately pi radians take the longest to recover equilibrium magnetization.

Hope this all helps.

Joseph

Yep, thanks, looks like that's the case. How do I turn off auto-scaling? I just looked through the manual and found vsadj and some similar commands, but no way to turn it off. - Evgeny Fadeev (Apr 16 '10 at 10:54)

Type ai to turn off that scaling and nm to restore it. - Adolfo Botana (Apr 24 '10 at 06:15)
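For anyone who wants to see why per-spectrum scaling produces the "binary" pattern, here is a minimal Python sketch. It is not the spectrometer software's actual algorithm: it assumes an ideal on-resonance peak whose height goes as the sine of the flip angle, and it models normalization crudely as scaling every trace to full height. The 40 us 360 degree pulse, the 10 degree step, and all function names are made up for illustration.

```python
import math

def peak_heights(pulse_widths_us, pw360_us):
    """Ideal peak height, proportional to sin(flip angle)."""
    return [math.sin(2 * math.pi * pw / pw360_us) for pw in pulse_widths_us]

def normalize_each(heights):
    """Crude model of per-spectrum scaling: every trace is drawn at full height."""
    return [math.copysign(1.0, h) if h != 0 else 0.0 for h in heights]

def zero_crossing(pulse_widths_us, heights):
    """Linearly interpolate the negative-to-positive crossing (the 360 degree null)."""
    points = list(zip(pulse_widths_us, heights))
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if y0 < 0 <= y1:
            return x0 + (x1 - x0) * (-y0) / (y1 - y0)
    raise ValueError("no negative-to-positive zero crossing in the array")

# Hypothetical array: 10 degree steps around an assumed 40 us 360 degree pulse.
pw360_true = 40.0
widths = [pw360_true * deg / 360 for deg in range(315, 406, 10)]

absolute = peak_heights(widths, pw360_true)   # smooth, sine-like heights
normalized = normalize_each(absolute)         # jumps straight from -1 to +1

pw360_est = zero_crossing(widths, absolute)   # null near the 360 degree pulse
pw90_est = pw360_est / 4                      # 90 degree pulse is a quarter of it

print("absolute:  ", [round(h, 2) for h in absolute])
print("normalized:", normalized)
print("pw90 estimate (us):", round(pw90_est, 3))
```

In absolute intensity the heights pass smoothly through zero, while the normalized traces flip from full negative to full positive in a single step, which matches the pattern in the screenshot. The zero crossing itself is unaffected by the display scaling, so the calibration can still be read from either view.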